For Whom the Duck Quacks

The Schutzduck Origin Story

Thanks to the team at Scrub Capital for publishing this in The Differential. Check it out!

In 2010, as a medicine resident with strong opinions and nowhere to put them, I started publishing the Schutzblog to make sense of the struggles of healthcare, seeking feedback from others and being helpful where I could. Fifteen years and a hundred posts later, my former CEO Rushika Fernandopulle called to praise something I’d recently written, saying it was perhaps my best work. It occurred to me that he loved it, not because it was new, but because it solved his problem right then. That’s when it hit me: as a periodical, Substack surfaces what’s recent. What my readers need is what’s relevant to them. Closing that gap is what eventually became Schutzduck.

But first, built in an afternoon, came the Schutzbot: a librarian in the form of a chat widget sitting in the Schutzblog. Trained on its contents, the bot would ask readers to share their problems and then recommend the relevant articles. Every person who tried it ignored the article recommendations and tried to get full-throated advice. It turns out that reading is hard work even if you are lucky enough to find what you need. You have to wrestle with a dense article and then apply it to your idiosyncratic situation, alone, left with lingering doubts that you missed or misinterpreted something. But what if you could talk to a perfectly patient library instead: ask it questions, work through multiple solutions to your unique problems and then come away more confident in your next steps? The most useful thing to build wasn’t a librarian, it was an oracle.

But before I could build the oracle, I had to wrestle with my own fears, of failure AND success. The clinician in me wanted to first do no harm. I’d been reading about the risks of these bots hallucinating and of people developing dangerous relationships with them, leading to real harm. What if my advice bot got it wrong or made things worse for someone already struggling? What about my hard-fought content? Would the AI companies just absorb it, or had they already? And if it did work, could anyone replicate it by ingesting my writing? Even deeper: I currently work as an advisor; was I building my own replacement?

I sought advice from wise, generous colleagues who assured me that some concerns were overblown and that nothing I would do would be more harmful than the status quo. They also reminded me that my data was likely out there, so I’d need something proprietary if I wanted to protect what I built. Most of all, with tools like Claude at my disposal, I could build this myself and it might actually be fun. After some honest reflection, mixed with a little YOLO (encouraged by the very prototype I was building), I decided to practice what I preach: try something small, get feedback, make a change and then repeat, handling the problems as they actually come.

My first baby step was to share a version of the Schutzbot, with a more permissive “give advice” prompt, with my wife and her visiting friend, whom I had just helped through a career pivot. They laughed because it did sound like me, and begrudgingly admitted that it was helpful. Emboldened, I decided to keep going, but this new thing needed a new name.

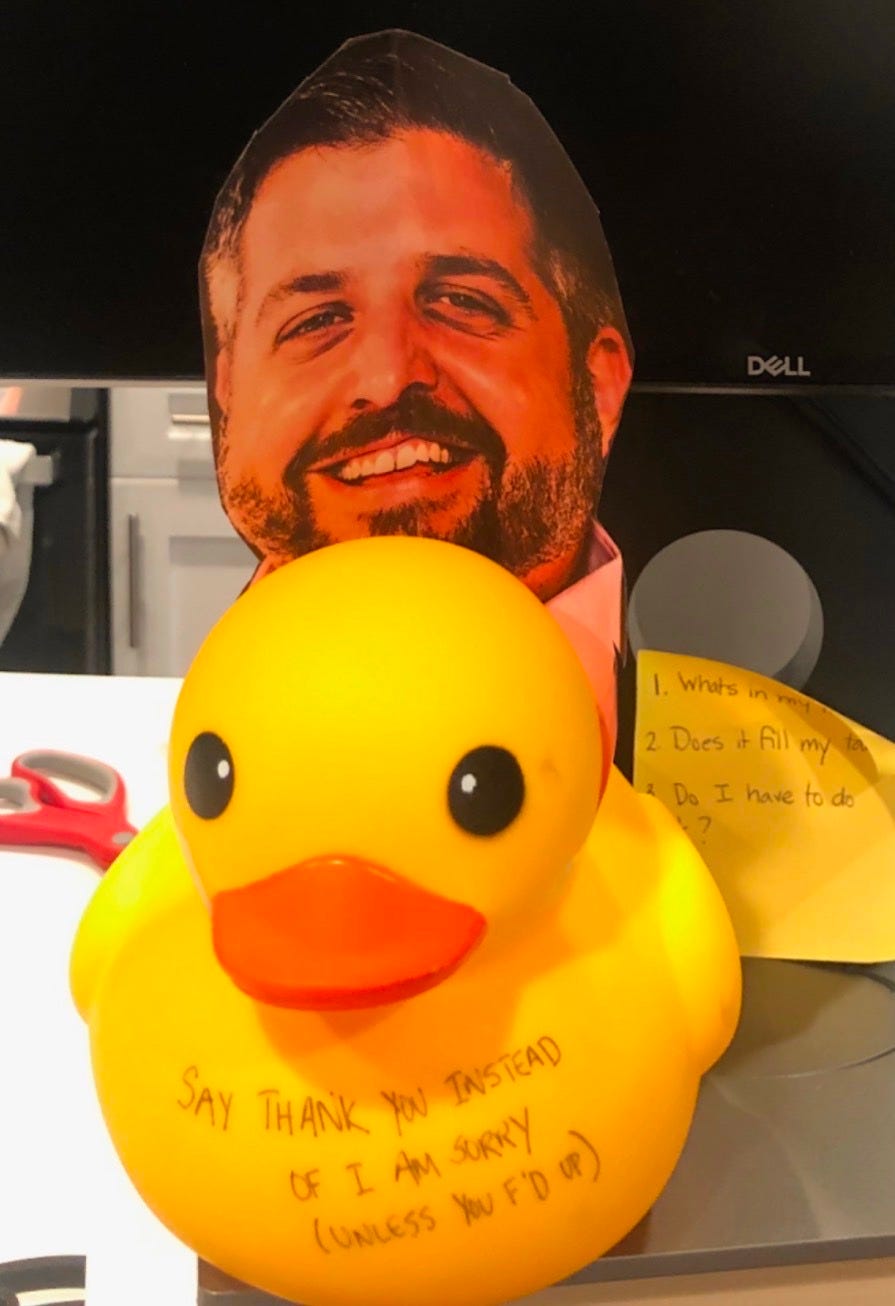

The answer had been sitting in my memory since 2020. As I was leaving Iora Health, a close colleague asked what she was going to do without me to call for advice. Partly to help her, partly to ease my own guilt about leaving, I told her about rubber duck debugging: the practice of talking your problem out loud to a rubber duck until the solution emerges. She sent me back this:

Tickled by the new name, I launched Schutzduck to friends and blog subscribers. Within days the feedback came pouring in: “sounds exactly like you,” “gets to the heart of the matter quickly,” “pushed back on me in a way ChatGPT never would.” That last one made me smile. Most AI advice is dangerously affirming. It validates your framing and sends you on your way. I’ve spent 15 years doing the opposite for people by reframing the problem, getting to the root, lovingly calling people on their bullshit. Now the duck was doing it too.

The next logical step was to build an actual website around the duck itself so I could offer users history and privacy, improve the technology, and capture some of the value I was creating. Only one problem: I am not a software engineer. But now we have Claude, and I could combine its generative capabilities with the discipline I’d learned from the best development team in health tech.

The first days were painful as I grappled with Claude. It was like working with a genius with a drinking problem: you never knew which one you were going to get that day. While it knew syntax, I knew what the duck needed to do. It was always certain the answer was right around the corner. I remembered the decision we’d made a week earlier that proved otherwise. Slowly, what had started as taking dictation morphed into a kind of apprenticeship. I was being quietly trained without realizing it, all while using words I had not understood only a month earlier. Never before had work felt so fun. We would make a change → get feedback → fix what’s broken → do more marketing to bring in new users → make more changes, repeat. Every physician reading this will recognize the loop: it’s clinical care, just with a different stack.

The good news is this loop is learnable. The tools exist, the methodology is reproducible, and if you have a body of work and a point of view, you have the raw material. Which raises the obvious question: if I can build this, can’t anyone? And if anyone can build it, what stops someone from just asking ChatGPT instead? Duck users say otherwise.

A general purpose model knows everything and believes nothing.

It will validate your framing, affirm your plan, and send you on your way feeling good. The duck will tell you you’re solving the wrong problem. That’s not a feature. That’s a philosophy — fifteen years of hard-won beliefs about how healthcare businesses actually fail, why smart people avoid decisions they already know they need to make, and what it takes to get the medicine in the patient. You can’t prompt your way to that.

It’s not enough to make a chatbot that sounds like you. It has to believe what you believe. The most defensible AI products won’t be the broadest. They’ll be the ones that believe something.